by dbward | Jul 18, 2022 | Innovation Coaching

I recently came across a question that’s been bouncing around in my head ever since: “What does it take to maintain that?”

It’s a good question to ask about anything you come across, from a building or a bridge or a website to a process or a cultural norm or a high level of fitness. It’s particularly important to ask it about anything YOU are going to make, initiate, or otherwise launch.

While innovation tends to focus on making new stuff (i.e. the Novelty piece of our innovation definition), if we’re going to have real impact over the long term, we’ve got to pay attention to maintenance. We’ve got to plan for it. Thus, the question.

And for those with a strong appetite for novelty, the good news is that maintenance has an element of newness in it as well. As the British writer G.K. Chesterton put it: “If you leave a white post alone it will soon be a black post. If you particularly want it to be white you must be always painting it again; that is, you must be always having a revolution. Briefly, if you want the old white post you must have a new white post.”

So… I encourage you to keep this question in mind as you go about doing innovation-y stuff: making, fielding, and offering new products and services. I also encourage you to find the revolution in maintenance as you turn the old white post into the new white post.

Photo credit Wikimedia Commons

by dbward | Feb 7, 2022 | Innovation Coaching

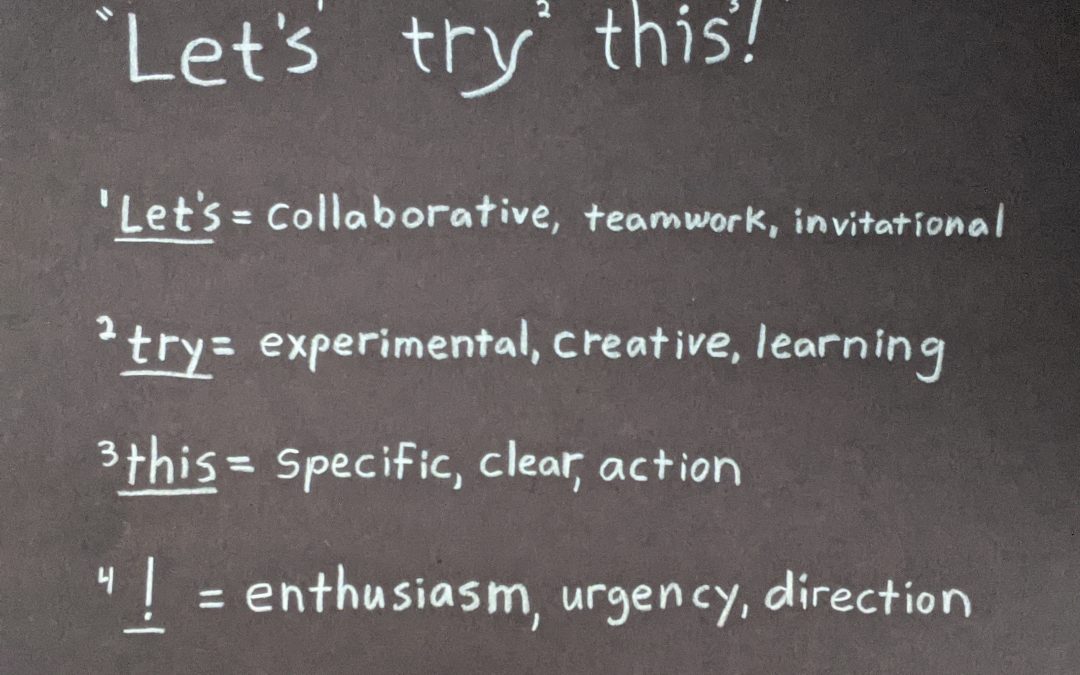

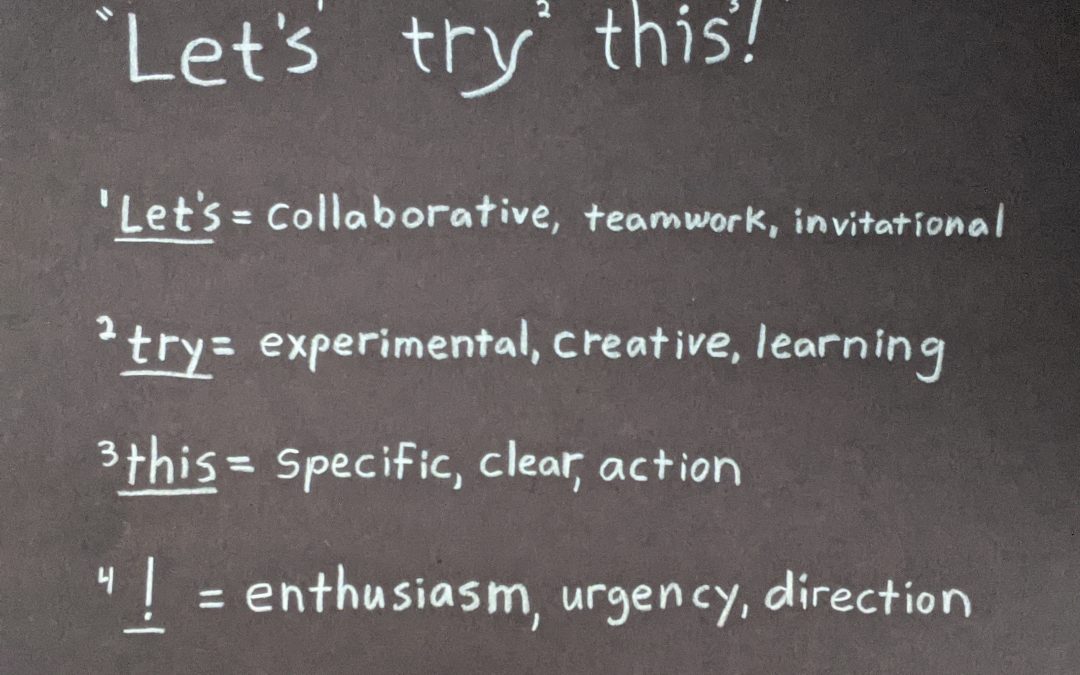

When we’re trying to make change in an organization, start a new initiative, or generally learn and grow as professionals, my favorite phrase to use is “Let’s Try This!”

If we say it correctly, it’s an inclusive invitation to experiment and learn something specific. Say it more than once and we’re on our way to an iterative series of collaborative experiments.

Please note that pronunciation matters. It’s not pronounced “Let’s ONLY try this” or “You have to try this” or “This will definitely work.” If we use these three words in an exclusive or controlling way, if they’re directed at YOU instead of US, or if there’s an implication of certainty and predictability, we’re saying it wrong.

And of course, it’s not enough to just say it; we also need to be ready to hear it from our teammates and respond accordingly. And if we really want to crank it up to 11, let’s sprinkle some “Yes-And” pixie dust on it too.

It’s the closest thing to a magic formula I know. I hope you’ll join me in giving it a try (see what I did there?). And remember, it’s pronounced leviOsa, not levioSA.

by dbward | Jan 10, 2022 | Innovation Coaching

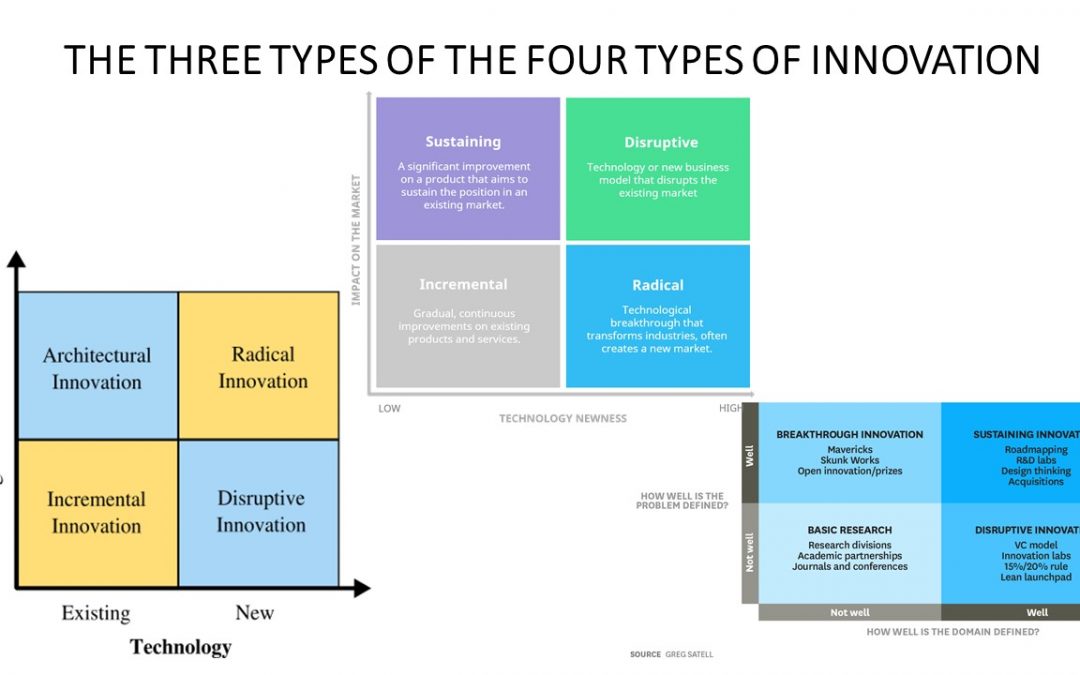

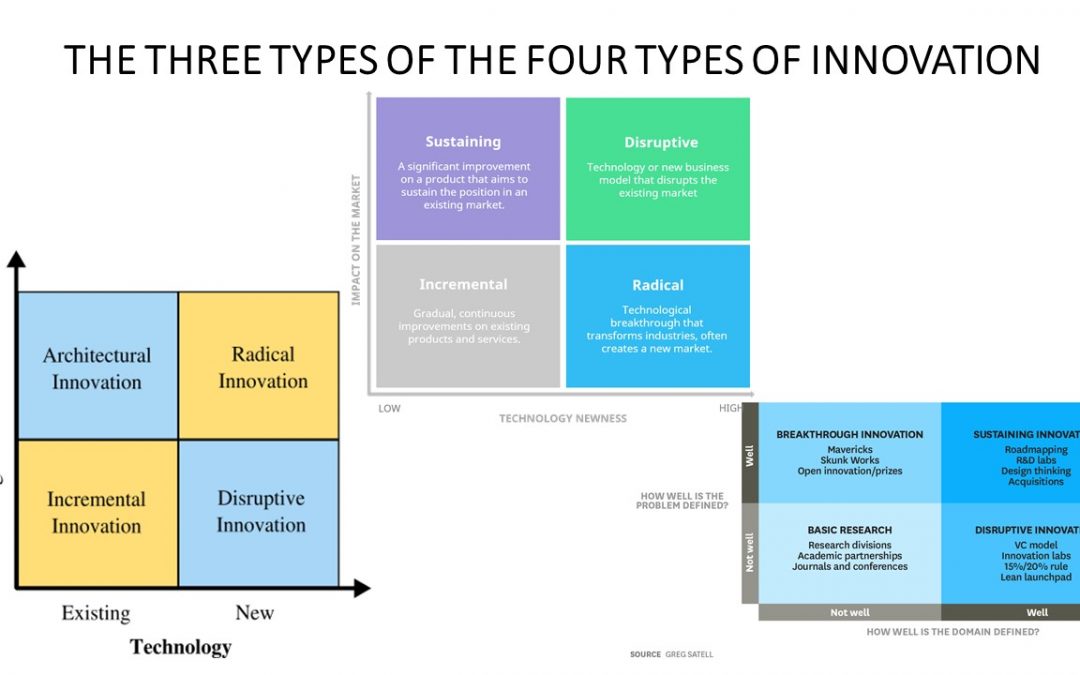

I often point out that innovation is one of those words that gets USED more often than it gets DEFINED. That is true, but it’s also true that innovation gets defined ALL THE DANG TIME, and in lots of interesting and amusing ways.

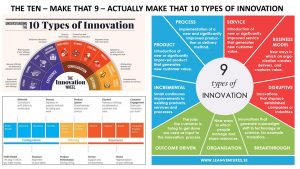

For example, ask your favorite search engine to show you “types of innovation,” and you’ll be treated to a bewildering collection of frameworks, infographics, and diagrams, many of which claim to show THE different types of innovation. The image above shows three different versions of “the four types of innovation,” while the image below shows several different ways to arrange the 10 (9?) types of innovation.

It’s ok to have a little laugh about all this. The situation is indeed kinda funny, and it can also be a little confusing. Here’s the good news: it’s also informative and educational if we approach it the right way.

Instead of getting too attached to any particular representation of THE types of innovation, we might adopt a curious posture towards all of them. We may find that each framework illuminates some facet of innovation… and casts a shadow on other facets. We may discover that while each one provides some insight on the topic, each framework also introduces gaps that leave important aspects unaddressed. Thus, we may benefit by becoming familiar with a whole bunch of them. And when we do that, we might even develop our own framework, our own contribution to the discussion.

As Seth Godin often points out, one hallmark of a professional, in any field, is that they do the reading and are familiar with the latest developments and thinking in their field. So please consider this your personal invitation to do the reading this year. If you want to be a professional in a field that values innovation, you should be able to define innovation and discuss not only the different types but also the different taxonomies: why and how they differ, and the strengths, weaknesses, and applications of each.

by dbward | Oct 11, 2021 | Innovation Coaching, Success Stories

Today’s post is co-authored by Bill Donaldson and Lauren Armbruster.

ITK Trainee’s Perspective:

The road to becoming a certified Innovation Tool Kit (ITK) member is clear and full of community support, but it can also make candidates a little nervous! For instance, one requirement is for all facilitators-in-training to run facilitation sessions while being observed by a veteran ITK member. Practice makes perfect… but I don’t think I’m alone in saying that we all wish we could be perfect on the first try! Thankfully, ITK members are trained to think positively and provide constructive feedback, which is exactly what I received from one of my observers, a certified ITK facilitator.

My experience with observation during an ITK practice was simple: I ran a Lotus Blossom brainstorming session as the sole moderator, Mural operator, and facilitator. I thought the audience was engaged and able to meet the goals we had established in advance, plus have a little fun along the way, but I was looking forward to hearing what a more experienced facilitator might suggest as best practices for the future.

Team Toolkit Member Perspective:

I saw a post on our internal channels asking for someone to serve as an Observer during a Workshop. Knowing how hard it was for me to complete those last items for ITK Certification, I was thrilled to help. “What an opportunity to help her get closer to her ITK certification,” I thought. I didn’t give much thought to how I would actually do this; after all, I’m a certified ITK facilitator.

While I was in the session taking awesome notes, it came to me: “How am I going to give her feedback?” So right after the session ended, I did that ITK thing: improv.

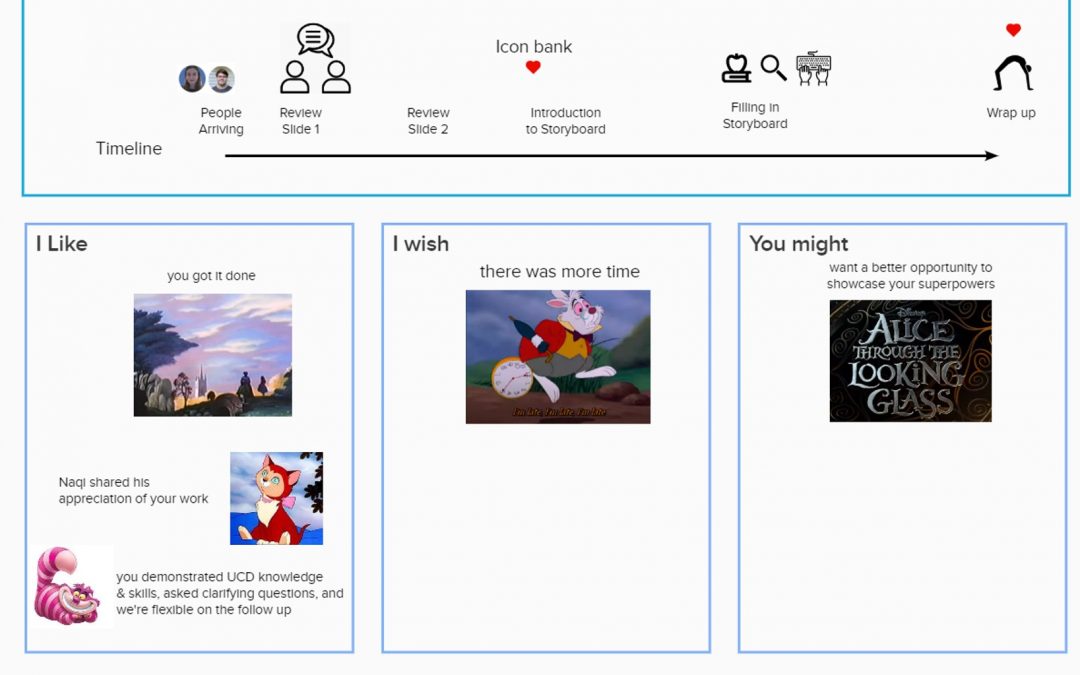

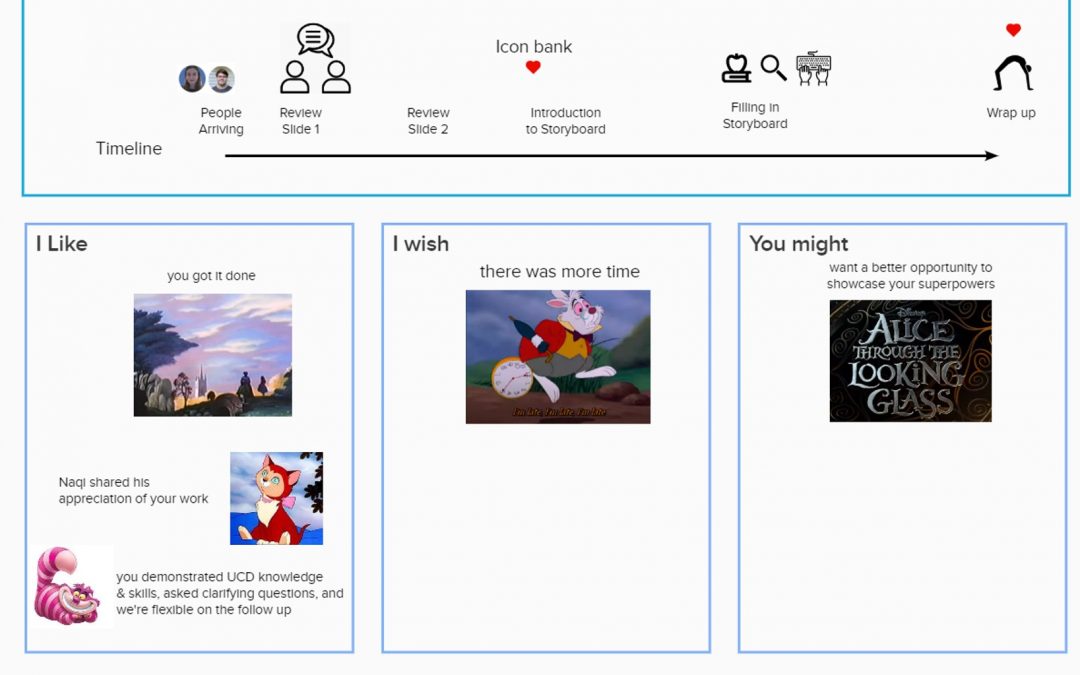

I started with the COIN model, but I wanted it to provide more of a critique than feedback, so I used the Critique method (“I Like, I Wish”) from an ITK Lunch and Learn. This still didn’t convey what the ITK Trainee accomplished in her Workshop, so I then applied the ITK superpower of “AND,” adding a User Journey on top like a cherry on a sundae.

What is this sundae? At the base are three columns: “I Like,” “I Wish,” and “I Hope.” Using “I” is a lighter way of providing feedback, and it keeps the feedback focused on specific things heard, seen, and felt. The User Journey is a great way to show how things changed over time for the specific observations. But wait, there’s more… all of this can be funner [yes, this is a word]. Add icons or pictures, or even use a book or movie theme to describe the Workshop. Humans are story sponges; our brains are wired to hear and remember stories. So make your observations reflect the story you saw and heard.

The recipe to recreate it…

1) Start with a quick meeting (15 mins) with the ITK Student before the Workshop for introductions and to identify any specific areas they want feedback on. These areas can be plotted on the User Journey. Make sure to ask how the person likes to get feedback. Even very well-done feedback can be a surprise if it isn’t expected. Likewise, skipping this step can make it hard for you to provide meaningful feedback when things appear to go awry.

2) During the Workshop, record Observable events and their Impact on the Workshop (both the flow and the attendees), along with the specific Context. These come from the COIN model.

3) Afterward, scan for themes and sort them into three groups: “I Like,” “I Wish,” and “I Hope.”

- The “I Like” items are things you liked or, even better, things someone said they liked during the Workshop.

- “I Wish” should be gentler than “areas for improvement.” When you wish something had happened, it’s just that: a wish. As the receiver, I can grant your wish or not.

- “I Hope” gives you the chance to push for something more or bigger that you can see but the observed person may not. You could make the case that “I Hope” is the same as “I Wish,” and you wouldn’t be wrong. For me, it was about the visual weight and the appearance of a 3:1 ratio of positive to negative comments.

4) Let the Story or theme come to you. Then find icons or pictures for observations and decorate the timeline. The entire effort should take under 30 minutes.

5) Meet with the ITK Student to present the Mural and let the Trainee define their Next steps. Start by asking the ITK Student how they thought they did. Use the exact words they gave you in step 1. Then go over the areas that aligned with their self-feedback and the areas where you saw something different.

ITK Trainee’s Perspective:

I found this to be the easiest feedback session I have ever experienced at MITRE! I knew (somewhat) what to expect thanks to our expectation-setting conversation, where I could identify specific areas I was worried about or wanted my observers to pay attention to. The timeline is a visual and intuitive way to track the progress of a full session. For me, it was most helpful to review very soon after I facilitated, so I could compare my own experience with what was observed, see if there were differences, and ask myself why that might be. Beginning with positive reinforcement through the “I Like” column was a comfort and built me up before the more constructive “I Wish” and “You Might” feedback, but all of it was ‘light’ and easy to take and talk about thanks to the fun icons, memes, and imagery used throughout.

Overall, this form of feedback was easy to understand and a great mix of positive feedback and constructive criticism. It is highly recommended as a model to provide feedback to others within ITK and across MITRE after sponsor briefs, during annual feedback sessions, and beyond.

by dbward | Aug 30, 2021 | Innovation Coaching

This week’s post is from Conor Mahoney, one of our new ITK Facilitator candidates.

Most mornings when I fire up my laptop, I take a few minutes to peruse the latest postings on arXiv, SSRN, and Ars Technica to make sure I stay up to date with new technological developments and research findings. As a data scientist in a field that is both rather nebulously defined and constantly evolving, I find this task often leads me to chase new “white rabbits” down their intellectual warrens like Alice in Alice in Wonderland. As I fixate on a new shiny object, my thoughts immediately turn to my Sponsor’s programs. Like someone working on a jigsaw puzzle with missing pieces, I see this new widget or tool filling some gap in capability or function and greatly enhancing the end result.

However, successful innovation efforts rarely begin that way, with a technology in search of a use case. After 22 years inside the federal government and military ecosystem, I have yet to see this approach work. Instead, the majority of the successful innovation efforts I have participated in started by deconstructing an existing problem and identifying areas where technological injects or process refinements could alleviate user pain or deliver enhanced results. It can be difficult to resist the siren call of the shiny and new and focus instead on what really delivers value: enhanced capabilities tied to well documented user needs.

To that end, I developed a phased approach that focuses teams on recognizing and ameliorating problems, using several of the ITK tools. I call it the D4 approach to innovation, and it involves Discovery (identifying mission or capability problems), Deliberation (identifying which pain points or capability gaps to address based on the perceived value gain), Deconstruction (unwrapping the problem to identify its threads, touchpoints, and relationships), and Development (ideating on solutions). I believe one of the best value propositions of this approach is its ability to familiarize “outsiders” with a project and the problems it aims to address. Bringing in people from outside fields, mission areas, and organizations to look at problems with new perspectives and fresh approaches can often lead to excellent, outside-the-box ideas, and it increases the diversity of thought that can be used to attack a problem set.

In terms of ITK tools, this process can be thought of roughly as Define, Understand, Generate, and Evaluate. In the Define phase, I might use Problem Framing to develop and document the problem statement at hand. With group consensus on a problem statement, I can then Journey Map the problem from a user or system perspective. As I move to Understand, I might break out the TRIZ Prism, which flows in sequence because it focuses on a well-defined problem (which I developed over the previous phases) and ends on a specific solution. Finally, during the Evaluate phase, I like Rose, Bud, Thorn to identify potential issues with our specified solution or enhancement and to consider potential negative impacts on other processes or related capabilities.

There is, of course, no wrong answer when it comes to tool-chaining across the ITK tool palette. But putting tools end to end so that each builds on the last is a useful approach: it provides continuity of thought and focus, and it can help keep the innovation session from getting too far off the rails. Thinking about the tools as part of a larger, integrated process and planning their use beforehand will often also provide “parking lots” for when team members rush towards a solution without defining a problem. I hope you enjoyed reading about my approach, and I hope you have fun innovating your own.

by dbward | Apr 19, 2021 | Innovation Coaching

Last week’s video addressed the question “What is innovation?” This week, we’re taking a look at a related topic – experimentation.